OpenAI backlash puts fresh focus on Oura’s Pentagon ties

OpenAI’s Pentagon deal sparked a user backlash this week, with many people uninstalling ChatGPT after the news broke. That same privacy concern now puts Oura’s Department of Defense ties in the spotlight, as the smart ring maker holds a defence contract worth nearly $100 million.

That does not mean the two situations are identical. OpenAI was hit by a fast public backlash tied to a fresh headline. Oura’s DoD ties have been sitting in the background for longer. But the overlap is obvious enough to matter. In both cases, the company sits close to highly personal data. In both cases, users are left asking the same basic question: how separate is my everyday product from the government-facing side of the business?

Why this matters now

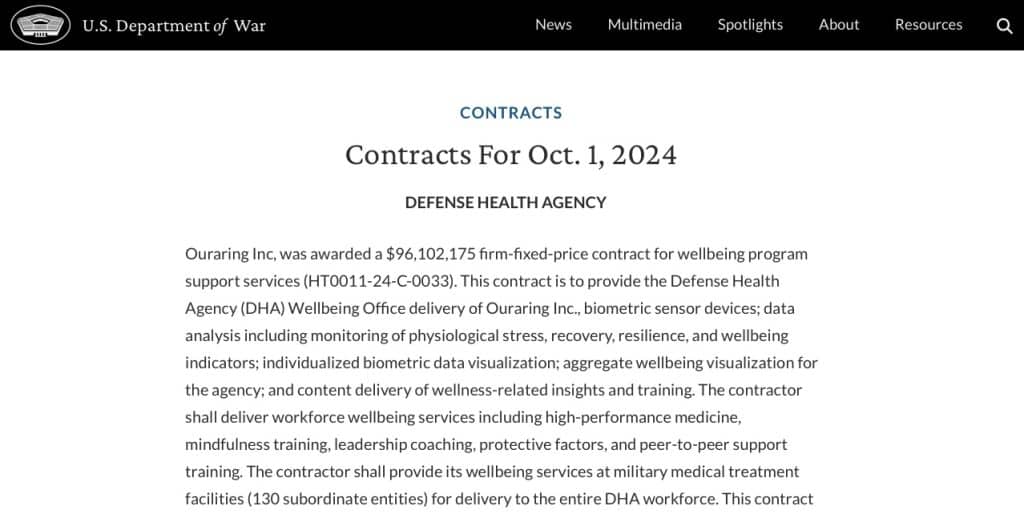

Oura’s defence connection is not a minor pilot or a vague promise of future cooperation. The clearest official record is the October 2024 Defense Health Agency contract awarded to the company.

The contract value was put at $96.1 million and the scope went beyond devices alone. It covers Oura supplying a broader stack that mixes hardware, software and analytics. Once readers understand that, the DoD link starts to look less like a side project and more like a proper business line.

Oura itself has reinforced that impression. In 2025 the company said the DoD was its largest enterprise customer and tied the relationship to an expanding US manufacturing footprint in Fort Worth, Texas, with operations projected for 2026. When a company starts shaping production around defence demand, it tells you this is not a tiny contract parked off to one side.

What Oura says about your data

This is where the story gets more delicate. We have not seen evidence that ordinary consumer Oura data is being fed to the DoD. In fact, Oura has publicly said the opposite. In its privacy messaging, the company says member data is not for sale and will never be sold or rented to the government. It also says consumer data does not touch its DoD-only offering unless the person is a service member enrolled in a relevant program and has consented to share that data.

That is an important distinction and it should not be blurred for clicks. Still, it does not make the story go away. Consumer trust is not shaped only by whether data is technically segregated. It is also shaped by perception, by the company you keep and by how comfortable users feel supporting a brand that works closely with defence agencies. The ChatGPT backlash showed how fast that discomfort can turn into real user action.

There is also the Palantir angle, which helped stir confusion around Oura last year. Oura’s defence messaging referenced Palantir FedStart as part of an IL5-ready hosting environment for government deployments. Later reporting suggested this was not some sweeping strategic partnership but rather a more limited commercial relationship tied to secure infrastructure requirements. Even so, the Palantir name adds fuel to the discussion. People tend to read it as shorthand for surveillance, whether that is fair in each case or not.

The bigger issue for wearables

The more interesting angle here is not whether Oura has done something wrong behind the scenes, but where wearable tech is heading. Devices that started out as personal wellness tools are slowly showing up in places like workforce monitoring, military readiness programs and large institutional health projects.

It is also worth noting that Oura seems to be the only smart ring company with such a connection. Other wearable brands have worked with military research or training programs over the years, but in the smart ring space Oura appears to be the only one with a formal contract and a platform built for government deployments. That makes it a bit of an outlier in the category.

And that shift can create a bit of a trust hurdle. Most people buy a smart ring to track sleep, readiness or recovery. It feels like a personal gadget, something you use for your own health. That feeling can start to change once the same platform also shows up in systems designed for resilience tracking, performance monitoring or wider wellbeing programs inside big organisations. And there is evidence some people are swapping their Oura Rings for other options because of this.

For Oura, the ChatGPT development probably means the defence side of the business will be harder to keep in the background. The company says consumer data is protected and kept separate from its government programs. That may well be enough for a lot of users. But the OpenAI situation shows how quickly people can start asking questions once a Pentagon connection enters the picture.
